Why This Keeps Happening Even When Everything “Looks Fine”

Preventing SD card corruption on Raspberry Pi systems starts with facing an annoying truth. These failures rarely come from heavy workloads or bad coding habits. They show up after weeks of calm uptime, right when confidence is highest. Filesystems suddenly flip to read-only. Devices refuse to boot. Logs vanish. Reimaging feels like the only move left.

Some of that damage happens before Linux even starts, when the bootloader or EEPROM writes firmware changes without enough power margin to finish cleanly. That is why corruption can feel random, even when applications never changed.

This behavior is baked into how Raspberry Pi boards interact with flash storage, power delivery, and Linux write patterns. The Raspberry Pi Foundation never designed these boards for the same storage tolerance as servers. SD cards handle voltage drops, metadata writes, and shutdowns poorly, especially in unattended systems.

Understanding what actually breaks first changes how corruption is prevented, not just delayed.

Key Takeaways

- SD card corruption is driven by write exposure plus power instability

- Light workloads still fail due to metadata writes

- Defaults favor disks, not flash cards

- Prevention means reducing writes, not chasing perfect power

- Recovery planning matters more than card quality

Context and Real-World Symptoms You Actually See

What Corruption Looks Like Before the System Fully Breaks

Preventing SD card corruption on Raspberry Pi systems gets easier once the early warning signs stop being ignored. Most setups do not fail loudly. They degrade quietly, then collapse all at once. One reboot works. The next one hangs. Services start timing out. Then the board drops into emergency mode like it has had enough of your nonsense.

Common symptoms show up in clusters:

- Boot delays that stretch longer each restart

- Filesystems switching to read-only without explanation

- Logs stopping mid-sentence

- Package updates failing with I/O errors

- SSH still responding while applications silently die

In headless systems, this often looks like a network issue at first. People blame Wi-Fi, cables, or routers. Meanwhile, the SD card controller is already choking on metadata writes it cannot safely complete.

The frustrating part is timing. These failures usually appear after stable uptime, not during stress tests. That is why so many users swear nothing changed. Technically, they are right. The environment did.

Why Raspberry Pi Boards Are Especially Hard on SD Cards

The Hardware and Software Stack Works Against Flash Storage

Preventing SD card corruption on Raspberry Pi systems means accepting that these boards stress flash storage in ways most people never see coming. This is not about raw performance. It is about how often the system writes tiny bits of data, when it writes them, and what happens when power wobbles at the wrong moment.

A Raspberry Pi boots from an SD card, runs the operating system on it, logs to it, swaps memory to it, and updates packages on it. All of that happens while running on a low-cost power design that reacts poorly to voltage drops. SD cards were built for cameras and phones, not always-on computers that write metadata all day long.

A few factors stack up fast:

- Linux writes far more often than most users realize

- ext4 updates metadata even when files do not change

- Background services flush buffers regularly

- Swap activity can spike without warning

- SD card controllers have limited power-loss protection

None of this breaks a card immediately. Instead, it wears cells unevenly and leaves the filesystem vulnerable during routine operations. One brief brownout during a metadata update is enough to flip the card into a corrupted state.

Why Light Workloads Still Fail

This is where people get frustrated. The Pi might only run a dashboard, a sensor, or a single service. CPU usage stays low. Disk usage looks fine. That does not matter. The damage comes from small, repeated writes over time, not big file transfers.

The SD card controller decides where data lands using its flash translation layer, not the operating system. When power dips mid-decision, the block device may still respond, but the map it depends on does not. That is why directories and system files break first, even when application data looks untouched.

Even an idle system writes timestamps, journal entries, and housekeeping data. SD cards hate that pattern. The board is not misbehaving. It is doing exactly what Linux does everywhere else. The difference is that servers use storage designed to survive it.

Power Loss Triggers Corruption, But It Is Not the Root Cause

Why the Blame Always Lands on the Plug

Preventing SD card corruption on Raspberry Pi systems usually turns into a power supply argument within five minutes. And yes, power loss matters. It just does not matter in the way most people think.

Raspberry Pi boards are sensitive to voltage drops. Even brief brownouts can interrupt a write already in progress. The board’s power management IC can detect undervoltage, but it cannot stop a write once cable resistance or a load spike pulls the power rail down. SD cards do not handle partial writes well, especially when metadata is involved.

Here is the part that surprises people. Many corruption events happen during normal operation, not shutdowns. Logging, package updates, time sync, and background flushes all write metadata constantly. A momentary voltage dip during one of those writes is enough to leave the filesystem inconsistent.

Why “Just Shut It Down Properly” Falls Apart

Proper shutdown advice assumes control. In real deployments, control is the first thing lost. Power flickers. Cables get bumped. Batteries drain. Remote sites reboot without warning. Telling an unattended system to behave politely during power loss ignores reality.

The root problem is exposure. The more often the system writes, the more chances there are for power instability to collide with a critical operation. Fixing corruption means reducing those collision points, not pretending power will always behave.

Filesystems and Mount Options That Quietly Make Things Worse

Defaults Assume Spinning Disks, Not Flash Cards

Preventing SD card corruption on Raspberry Pi systems gets harder when default filesystem behavior goes unquestioned. Most Raspberry Pi installs use ext4 with standard mount options. That setup works great on hard drives and SSDs. On SD cards, it quietly increases risk.

The ext4 filesystem uses journaling to protect metadata. Journaling means extra writes, and extra writes mean more chances for failure during power instability. Even reads can trigger metadata updates unless access times are disabled. The kernel's default relatime behavior already limits access-time updates, but it still writes one when a file is read after being modified or when the recorded access time is more than a day old. noatime removes those writes entirely.

A few defaults cause trouble fast:

- Access time updates trigger writes on reads

- Journaling commits happen on a fixed schedule

- Metadata updates cluster around directories

- Background flushes run even at idle

None of these are bugs. They are normal Linux behavior. The problem is volume and timing, not correctness.
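
To see which of these defaults apply to a running system, the options the kernel actually applied can be read from /proc/mounts. The sketch below is one way to do that. The function names mount_options and has_mount_option are hypothetical helpers introduced here, not part of any standard tool.

```shell
# mount_options MOUNTPOINT [MOUNTS_FILE]
# Print the option string for MOUNTPOINT. The last matching line wins,
# because later mounts shadow earlier ones on the same path.
mount_options() {
  awk -v mnt="$1" '$2 == mnt { opts = $4 } END { print opts }' "${2:-/proc/mounts}"
}

# has_mount_option OPTION OPTION_STRING
# Succeed only if OPTION appears as a whole comma-separated entry.
has_mount_option() {
  case ",$2," in
    *",$1,"*) return 0 ;;
    *) return 1 ;;
  esac
}

# Example: warn when the root filesystem still updates access times.
# opts=$(mount_options /)
# has_mount_option noatime "$opts" || echo "root is mounted without noatime: $opts"
```

Matching whole comma-separated entries matters here: a plain substring search for "atime" would also match noatime and relatime.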

Mount Choices That Increase Exposure

Every write is a liability window. Some mount options widen that window without adding value on Raspberry Pi systems. Delayed allocation improves performance on disks but increases damage when power drops mid-operation, before the filesystem finishes mapping data.

This is why corruption often hits directories and system files first. The data itself may still exist. The map pointing to it does not. Tools like fsck repair the aftermath, not the conditions that caused it.

How to Confirm the Real Cause of SD Card Corruption

Stop Guessing and Start Verifying

Preventing SD card corruption on Raspberry Pi systems stalls when people treat every failure the same. Reimaging hides evidence. Swapping cards resets the clock.

The kernel ring buffer usually tells the story first. Even if journald stops writing or logs disappear entirely, dmesg already captured the voltage drop or I/O error that started the chain reaction. Run this before the card fully collapses:

dmesg | grep -iE "voltage|i/o error|ext4|mmcblk|reset" | tail -30

A system heading toward failure typically shows output like this:

[ 142.884321] Under-voltage detected! (0x00050005)

[ 143.001204] mmcblk0: error -110 transferring data, sector 2048, nr 8

[ 143.001351] end_request: I/O error, dev mmcblk0, sector 2048

[ 144.223891] EXT4-fs error (device mmcblk0p2): bad block bitmap checksum

[ 145.009144] EXT4-fs (mmcblk0p2): Remounting filesystem read-only

If you see mmcblk0 errors paired with undervoltage warnings in the same window, power instability during a write is almost certainly the root cause, not a failing card.
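
The "same window" check can be automated. The sketch below reads dmesg output on stdin and reports whether any mmcblk error falls within a few seconds of an undervoltage message. It assumes the default dmesg timestamp format (seconds since boot in square brackets). The function name classify_dmesg, the 5-second default window, and the result labels are choices made for this example.

```shell
# classify_dmesg [WINDOW_SECONDS]
# Reads kernel log lines on stdin and prints one of:
#   power-related      an mmcblk error within WINDOW_SECONDS of an undervoltage line
#   io-errors-only     mmcblk errors with no nearby undervoltage line
#   undervoltage-only  undervoltage lines but no mmcblk errors
#   clean              neither
classify_dmesg() {
  awk -v window="${1:-5}" '
    # Extract the numeric timestamp from a "[ 142.884321] ..." prefix.
    function ts(line,   t) {
      t = line
      sub(/^\[ */, "", t)
      sub(/\].*$/, "", t)
      return t + 0
    }
    tolower($0) ~ /under-?voltage/ { uv[++nuv] = ts($0) }
    /mmcblk[0-9]+.*error|I\/O error.*mmcblk/ { io[++nio] = ts($0) }
    END {
      for (i = 1; i <= nio; i++)
        for (j = 1; j <= nuv; j++) {
          d = io[i] - uv[j]
          if (d < 0) d = -d
          if (d <= window) { print "power-related"; exit }
        }
      if (nio > 0) print "io-errors-only"
      else if (nuv > 0) print "undervoltage-only"
      else print "clean"
    }'
}

# Example:
# dmesg | classify_dmesg 5
```

A result of io-errors-only is not proof of a worn card, since the kernel only logs undervoltage it manages to detect, but it is a reason to test the card itself.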

You can also check current write activity and journal pressure before things go wrong:

# See how much is being written to the SD card right now (iostat is part of the sysstat package)

iostat -x mmcblk0 2 5

# Check journal size on disk

journalctl --disk-usage

Watch for patterns like:

- Undervoltage warnings tied to load changes

- Repeated ext4 recovery messages on boot

- Buffer I/O errors on specific blocks

- Filesystems remounting as read-only
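
Where iostat is not installed, the write-activity question can also be answered from /proc/diskstats, whose tenth field is the number of 512-byte sectors written to a device. A rough sketch, with sectors_written and kib_written_between as hypothetical helper names:

```shell
# sectors_written DEVICE [DISKSTATS_FILE]
# Print the cumulative count of 512-byte sectors written to DEVICE.
sectors_written() {
  awk -v dev="$1" '$3 == dev { print $10 }' "${2:-/proc/diskstats}"
}

# kib_written_between BEFORE AFTER
# Convert the difference between two sector counts to KiB (2 sectors = 1 KiB).
kib_written_between() {
  echo $(( ($2 - $1) / 2 ))
}

# Example: measure one minute of writes on an otherwise idle system.
# before=$(sectors_written mmcblk0)
# sleep 60
# after=$(sectors_written mmcblk0)
# echo "$(kib_written_between "$before" "$after") KiB written in 60 s"
```

Running this at idle gives a baseline, so the effect of each mitigation can be measured rather than assumed.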

Distinguishing Wear From Power Problems

Not all corruption has the same fingerprint. Worn cards fail differently than power-triggered damage.

| Symptom | Likely Cause |
|---|---|
| Random blocks unreadable | Flash wear |
| Metadata errors after reboot | Power interruption |
| Corruption after updates | Write timing issue |
| Temporary recovery after fsck | Power-related inconsistency |

If reformatting fixes the issue briefly, the card is not healed. It was never the root problem.

Why “It Works After Reimage” Proves Nothing

A clean image reduces write pressure and resets metadata. That masks the failure mode. The same environment that caused the first corruption is still waiting. Without changes, the next failure is already scheduled.

Common Advice That Sounds Smart but Fails in Practice

Why the Usual Fixes Keep Letting You Down

Preventing SD card corruption on Raspberry Pi systems often turns into advice roulette. Forums repeat fixes because they sound reasonable, not because they survive long-running use.

“Just Buy a Better SD Card”

Buying a better SD card delays failure but does not change write behavior or power instability. Alternative filesystems such as f2fs, overlayfs, or squashfs help in narrow cases, but they do not eliminate unstable power or sustained write pressure either.

“Add a UPS and You’re Done”

A battery helps with hard power cuts. It does nothing for voltage drops that happen downstream of it, such as cable resistance or transient dips under load. Many corruption events happen while the system is still running, not shutting down.

“Switch Everything to Read-Only”

This advice skips the hard part. Services still need write paths. Logs still go somewhere. Updates still happen. Without a clear plan for volatile data, systems either break quietly or lose diagnostics when things go wrong.

“Disable Logging”

Disabling logs reduces writes but removes evidence. When the next failure arrives, there is nothing left to show whether power, wear, or a misbehaving service caused it.

“It’s Fine, I’ve Pulled Power for Years”

Survivorship bias is real. Some cards get lucky. Others fail early. Recommending luck as a strategy does not scale.

Practical Mitigations That Actually Reduce Corruption Risk

Reducing Write Exposure Instead of Chasing Perfect Power

Preventing SD card corruption on Raspberry Pi systems finally gets traction when the focus shifts away from miracle fixes and toward exposure control. The goal is not perfect power or perfect cards. It is fewer writes, fewer risky moments, and fewer chances for power instability to collide with storage at the wrong time.

Memory pressure is one of the most common triggers people miss. When RAM runs tight, the kernel pushes data into swap without warning. A swapfile can suddenly hammer the SD card with write bursts right when the system is already unstable. That spike often happens during routine background activity, not heavy load, which is why corruption shows up on light workloads. Using zram changes that failure pattern by keeping swap activity in memory instead of on flash.
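
Before and after switching to zram, it helps to confirm where swap actually lives. The sketch below lists active swap areas from /proc/swaps that are not zram devices. It assumes any non-zram swap is backed by the SD card, which will not hold if swap already sits on a USB drive. The function name flash_backed_swap is hypothetical.

```shell
# flash_backed_swap [SWAPS_FILE]
# Print every active swap area that is not a /dev/zram* device.
# The first line of /proc/swaps is a header and is skipped.
flash_backed_swap() {
  awk 'NR > 1 && $1 !~ /^\/dev\/zram/ { print $1 }' "${1:-/proc/swaps}"
}

# Example: complain if anything other than zram is in use.
# leftover=$(flash_backed_swap)
# [ -z "$leftover" ] || echo "swap still on persistent storage: $leftover"
```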

Move Volatile Writes Out of the Way

Not all data deserves permanent storage. Temporary files, caches, and runtime state change constantly and do not need to survive a reboot. Letting those writes hit the SD card adds wear with no benefit. Common candidates to relocate include temporary directories, application caches, and runtime sockets and PID files. Placing these in memory-backed locations reduces write amplification immediately.

Reduce Write Frequency Without Breaking Services

Many services write far more often than they need to. Timers, sync intervals, and housekeeping jobs default to conservative settings meant for servers with robust block devices and power protection. On Raspberry Pi systems, that behavior increases exposure. Slowing write intervals reduces pressure without breaking functionality. Fewer flushes mean fewer metadata updates and fewer chances for writes to be interrupted by voltage dips.
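
One concrete place this applies is the kernel's own writeback timing. The sketch below prints a sysctl fragment that lengthens the interval between flusher wakeups (vm.dirty_writeback_centisecs) and the age at which dirty pages must be written out (vm.dirty_expire_centisecs). The function name, the example values, and the drop-in file name in the usage comment are assumptions for illustration. Longer intervals also mean more unwritten data at risk if power drops, so the values are a tradeoff, not a recommendation.

```shell
# render_writeback_conf WRITEBACK_SECONDS EXPIRE_SECONDS
# Print sysctl settings for the given intervals. The kernel expects
# these two values in hundredths of a second.
render_writeback_conf() {
  printf 'vm.dirty_writeback_centisecs = %d\n' $(( $1 * 100 ))
  printf 'vm.dirty_expire_centisecs = %d\n' $(( $2 * 100 ))
}

# Example (file name is hypothetical):
# render_writeback_conf 15 60 | sudo tee /etc/sysctl.d/90-sd-writeback.conf
# sudo sysctl --system
```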

Isolate Logs Instead of Killing Them

Logs are useful right up until they destroy the storage they live on. The fix is not silence. It is isolation. System services still need diagnostics, but not every log needs to be persistent. Separating high-churn logs from critical system records reduces constant write pressure while keeping enough visibility to troubleshoot failures when they happen.
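
For journald specifically, one way to keep diagnostics without constant flash writes is to hold the journal in RAM with a size cap. The sketch below prints a drop-in that uses the Storage= and RuntimeMaxUse= settings from journald.conf. The function name, the 16M default cap, and the drop-in file name are illustrative assumptions. A journal kept this way is lost on reboot, so anything that must persist needs its own destination.

```shell
# render_journald_volatile [MAX_USE]
# Print a journald drop-in that keeps the journal in /run (RAM)
# and caps its size at MAX_USE (default 16M).
render_journald_volatile() {
  printf '[Journal]\nStorage=volatile\nRuntimeMaxUse=%s\n' "${1:-16M}"
}

# Example (file name is hypothetical):
# sudo mkdir -p /etc/systemd/journald.conf.d
# render_journald_volatile 16M | sudo tee /etc/systemd/journald.conf.d/10-volatile.conf
# sudo systemctl restart systemd-journald
```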

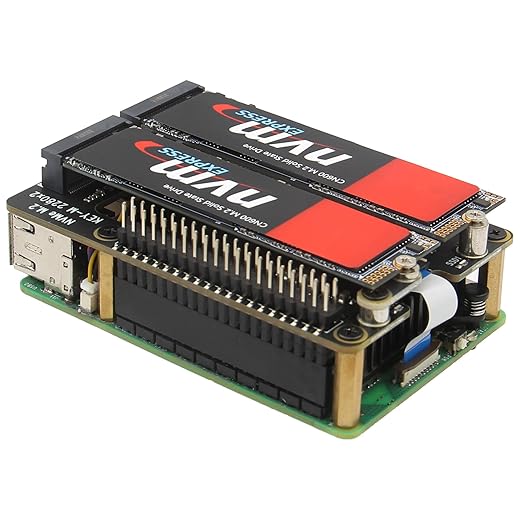

External Storage Changes the Equation

Moving the root filesystem or heavy-write paths to USB storage shifts the risk profile significantly. Even modest USB SSDs handle write amplification, controller caching, and brief power interruptions better than SD cards. This does not make the system immune to failure. It makes failures less frequent, less destructive, and easier to recover from. At that point, corruption becomes manageable instead of inevitable.

How to Apply These Mitigations

Each mitigation below can be applied independently. Start with noatime and zram — they take five minutes and help every setup regardless of workload.

noatime and commit interval (fstab):

# Open fstab

sudo nano /etc/fstab

# Find your root partition line and add mount options

# Before:

PARTUUID=xxxxxxxx-02 / ext4 defaults 0 1

# After:

PARTUUID=xxxxxxxx-02 / ext4 defaults,noatime,commit=300 0 1

# Verify after reboot

mount | grep "on / "

zram (swap in RAM):

sudo apt install zram-tools -y

# Set swap size to 50% of RAM

sudo nano /etc/default/zramswap

# Set: PERCENT=50

sudo systemctl enable zramswap

sudo systemctl start zramswap

# Disable the default swapfile (applies to Raspberry Pi OS releases that ship dphys-swapfile)

sudo dphys-swapfile swapoff

sudo systemctl disable dphys-swapfile

# Verify

zramctl

tmpfs for volatile paths (fstab):

# Add to /etc/fstab

tmpfs /tmp tmpfs defaults,noatime,nosuid,size=100m 0 0

tmpfs /var/tmp tmpfs defaults,noatime,nosuid,size=30m 0 0

tmpfs /var/log tmpfs defaults,noatime,nosuid,mode=0755 0 0

Putting /var/log on tmpfs means logs do not survive reboots. With no size= set, this mount can also grow to the tmpfs default limit of half of RAM, so consider adding one. If you need persistent logs for debugging, redirect only high-churn services to tmpfs and keep journald writing to a separate persistent partition.

Read-Only Root Filesystems and the Tradeoffs Nobody Mentions

Why This Works and Why It Still Breaks Things

Preventing SD card corruption on Raspberry Pi systems often leads people to read-only root filesystems. On paper, it sounds perfect. If the root filesystem never writes, corruption should disappear. In practice, it only moves the problem unless the rest of the system is designed around that constraint.

Making the root filesystem read-only does reduce risk. It removes constant metadata churn from the operating system itself. That alone eliminates a large class of failure windows caused by background writes, package management, and routine housekeeping. For fixed-purpose devices, this can be a meaningful improvement.

The trouble starts when people assume read-only means write-free.

What Still Writes Even When Root Is Read-Only

A read-only root filesystem does not stop the system from needing write paths. Several subsystems still expect somewhere to put data:

- Logs from system services

- Runtime state and lock files

- Temporary files and sockets

- Application data that changes over time

- Update metadata during maintenance windows

If these writes are not redirected deliberately, services fail in ways that are easy to miss. Some crash loudly. Others hang quietly. systemd may keep restarting units without leaving usable logs behind. From the outside, the system looks unstable, even though the filesystem is technically protected.

Without clearly defined write paths, read-only setups trade SD card corruption for silent service failures and missing diagnostics.
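
A quick pre-flight check helps catch missing write paths before services start failing. The sketch below takes a list of directories that services expect to write to and prints the ones that are missing or not writable by the current user. The function name unwritable_paths and the example directories are hypothetical. The real list depends on the services installed.

```shell
# unwritable_paths DIR...
# Print each DIR that does not exist, is not a directory, or is not
# writable by the current user. Prints nothing when all are usable.
unwritable_paths() {
  for candidate in "$@"; do
    if [ ! -d "$candidate" ] || [ ! -w "$candidate" ]; then
      printf '%s\n' "$candidate"
    fi
  done
}

# Example: run at boot, as the same user the services run as.
# unwritable_paths /tmp /var/log /var/lib/myservice
```

Because the writability test reflects what the calling user can do, running it as root will hide permission problems that an unprivileged service would hit.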

Operational Pain Points People Discover Late

Read-only systems add friction to routine maintenance. Updates require remounting filesystems, coordinating reboots, and ensuring nothing writes at the wrong moment. Firmware updates, configuration changes, and recovery procedures all become more rigid. These setups work best when the workload is fixed and predictable, writes are limited to known locations, updates are infrequent and planned, and recovery paths are tested rather than assumed.

Without that discipline, read-only root filesystems reduce one failure mode while introducing others that are harder to diagnose remotely. Used intentionally, they are a solid tool. Used casually, they create brittle systems that fail quietly instead of loudly.

Designing Long-Running Deployments That Assume Failure

Because Hoping for Perfect Uptime Is How Systems Die Quietly

Preventing SD card corruption on Raspberry Pi systems gets realistic when failure is treated as normal, not exceptional. Long-running deployments do not fail because engineers are careless. They fail because time, power, and storage always win eventually. The mistake is designing systems that panic when storage blinks.

Accept That Corruption Is a “When,” Not an “If”

Flash storage wears. Power fluctuates. Filesystems take hits. Designing for perfection assumes none of that happens. Designing for failure assumes it will and plans around it. That mindset changes priorities fast:

- Recovery matters more than prevention alone

- Redeploy speed beats fragile tuning

- Monitoring early signals matters more than uptime bragging

Teams that treat devices as immutable infrastructure rely on a golden image and fast provisioning instead of hoping a card survives forever. A watchdog timer restarting a clean system beats nursing a corrupted one remotely.

Build for Fast Recovery, Not Delicate Survival

A resilient Raspberry Pi deployment can lose its SD card and still recover without human intervention. That means automated provisioning from known-good images, configuration stored off-device or regenerated on boot, services that tolerate reboot cycles, and minimal state stored locally. When corruption happens, the system does not limp. It resets cleanly.

Watch for Early Warning Signs

Systems rarely die without warning. Subtle symptoms appear first:

- Rising reboot frequency

- Filesystems remounting read-only

- Increasing I/O latency

- Kernel voltage warnings

Catching these early lets systems be serviced before total failure.
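
On Raspberry Pi hardware, the firmware exposes voltage and throttling history through vcgencmd get_throttled, which returns a bitmask such as throttled=0x50005. A small decoder makes that value usable in monitoring scripts. The bit meanings below follow the Raspberry Pi documentation. The function name decode_throttled and the wording of the labels are choices made for this sketch.

```shell
# decode_throttled VALUE
# VALUE is the output of `vcgencmd get_throttled` (with or without the
# "throttled=" prefix). Prints one line per flag that is set.
decode_throttled() {
  throttled_val=$(( ${1#throttled=} ))
  while read -r bit label; do
    if [ $(( ($throttled_val >> $bit) & 1 )) -eq 1 ]; then
      echo "$label"
    fi
  done <<'EOF'
0 under-voltage now
1 ARM frequency capped now
2 throttled now
3 soft temperature limit active
16 under-voltage has occurred since boot
17 ARM frequency capping has occurred since boot
18 throttling has occurred since boot
19 soft temperature limit has occurred since boot
EOF
}

# Example:
# decode_throttled "$(vcgencmd get_throttled)"
```

The "since boot" flags are the useful ones for unattended systems: they record a brownout even if nobody was watching when it happened.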

Know When to Walk Away From SD Cards

At some scale or duty cycle, SD cards stop making sense. USB SSDs, network boot, or small x86 systems cost more up front but save time and frustration later. Reliability is a design choice, not a tweak.

When Raspberry Pi Is the Wrong Tool Entirely

Hard Truths That Save Time and Money

Preventing SD card corruption on Raspberry Pi systems sometimes means admitting the board is being asked to do a job it was never meant to handle. This is not a failure of skill. It is a mismatch between hardware limits and workload expectations.

Raspberry Pi struggles when:

- Writes are constant and unavoidable

- Power quality cannot be controlled

- Physical access is limited or nonexistent

- Recovery must be hands-off and fast

Industrial environments, remote monitoring, and always-on services expose every weakness SD-based boot systems have. At that point, tuning feels clever but solves the wrong problem. Switching to USB SSDs, network boot, or small x86 systems often costs less than repeated downtime, reimaging, and lost trust. Reliability is not about squeezing more from the board. It is about choosing the right platform early.

FAQ

Why does SD card corruption happen even when nothing is running?

Background services still write metadata, timestamps, and housekeeping data. Idle does not mean inactive.

Do industrial SD cards completely fix the problem?

They last longer and fail more gracefully, but power loss and filesystem behavior still apply.

Is a UPS enough to stop corruption?

It helps with full outages, not brief voltage drops or load-related brownouts.

Does switching to USB boot eliminate the risk?

It reduces it significantly. USB storage handles writes and power loss better than SD cards.

Is read-only root the safest option?

Only when write paths, updates, and recovery are planned carefully. Otherwise it creates new failures.

Why does fsck say the card is fine, but corruption keeps coming back?

Because fsck repairs filesystem structure, not the conditions that caused the damage. If journald, swap activity, or firmware writes hit unstable power again, corruption returns even though the card tested clean.

What mount options actually reduce SD card writes?

noatime stops the filesystem from writing an access timestamp when a file is read. commit=300 extends the journal commit interval from the default 5 seconds to 5 minutes, reducing how often metadata is flushed to disk. The tradeoff is that a power cut can lose up to five minutes of recent writes. Both are safe for most Pi workloads and require only an /etc/fstab change.

How do I know if my SD card is already failing vs. just corrupted?

Run sudo badblocks -v /dev/mmcblk0 on a separate machine after imaging the card. The device name depends on the card reader and may appear as /dev/sdX instead, so confirm it with lsblk first. Scattered unreadable blocks across the card suggest wear-out. If the card passes but corruption returns after reimaging, the environment (power or write pressure) is the actual problem.

Does Raspberry Pi OS do anything to protect the SD card by default?

Not much. Recent versions include some overlay filesystem tooling, but it is not enabled by default. The default install uses ext4 with standard mount options, journaling enabled, and swap active — all of which increase write exposure.

Can I use f2fs instead of ext4 to reduce corruption?

f2fs is designed for flash storage and handles write amplification better in theory. In practice, it is less tested on Raspberry Pi, recovery tooling is less mature, and it does not solve power instability. Worth experimenting on non-critical systems, but not a reliable substitute for the mitigations above.

How often should I back up a Raspberry Pi SD card?

For anything you would not want to reimage from scratch, weekly at minimum using dd or a tool like rpi-clone. For critical deployments, treat the device as stateless and store all configuration in version control or a remote config system so reimaging is a five-minute job rather than a recovery crisis.

What is the best SD card brand for Raspberry Pi?

Brand matters less than card class and use case rating. Look for cards rated A1 or A2 rather than standard UHS speed class cards. SanDisk Endurance and Samsung PRO Endurance lines are built for continuous write workloads and fail more gracefully than standard cards — but they still fail. Card quality buys time, not immunity.

Next Steps

If you are ready to act on what is here, start with noatime and zram — they take five minutes and reduce write pressure on every setup regardless of workload. From there:

- For a full USB SSD boot walkthrough, see: Booting Raspberry Pi from USB SSD

- For overlayfs and read-only root setup, see: Raspberry Pi Read-Only Root Filesystem Setup

- For zram configuration details, see: Setting Up zram on Raspberry Pi

If your Pi runs a specific service, the mitigations that matter most depend on your workload. A Pi-hole tolerates tmpfs logs easily. A Vaultwarden instance needs more careful write path planning before you go read-only. When in doubt, USB SSD boot is the most reliable long-term path for anything running unattended.
